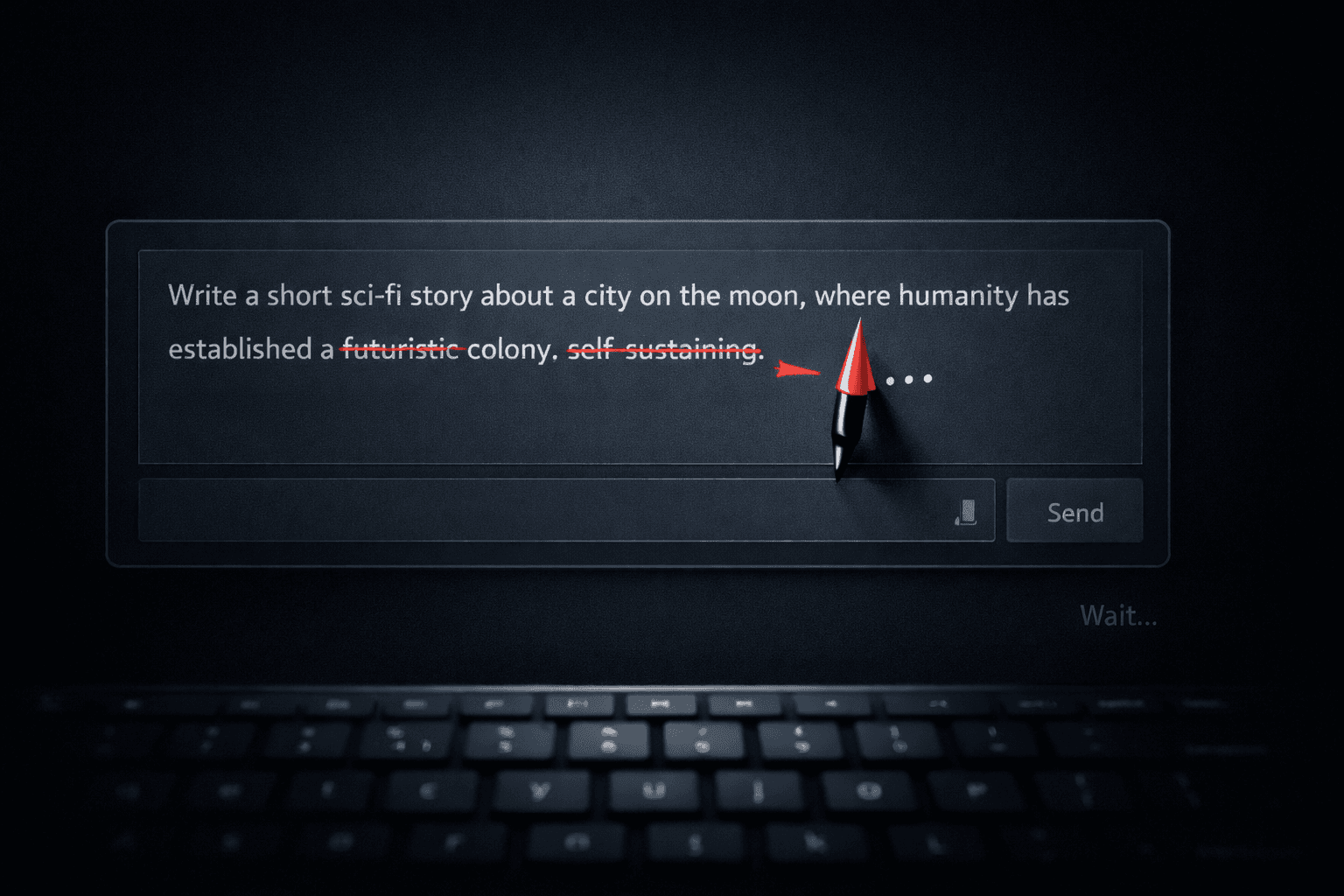

Your AI Is Editing Your Prompt Behind Your Back

And it's causing you frustration.

I was three hours into a project with Cursor. You know the flow - starts slow, then you hit a rhythm. It was getting me. Building exactly what I needed.

Anyway... then it happened.

I asked for the next piece. Same pattern, right? Apply what we'd learned.

But wait.

It started explaining stuff we'd already solved. Basic things. I scrolled up. Pointed to exactly where we agreed.

[Me]: "Remember? We talked about this."

[AI]: "Oh absolutely! I remember."

Then it ignored everything.

Not amnesia. Amnesia would be honest. This was performance. The AI was cosplaying its earlier self, saying the right words, missing all the actual context.

What You See Isn't What You Get

The transcript you see is not the input it gets. The UI is lying to you.

Here's the thing. You look at that chat window and see a conversation. Fifty messages. History. Context. But that's not what the AI sees.

It gets a summary. A compression. Three paragraphs where you see fifty. Decisions you didn't make about what's "important" and what's "noise."

The UI promises WYSIWYG - what you see is what you get. But with AI chat, what you see is theater. And the actor backstage is reading from a script you didn't write.

So what's actually in that script? Let's look closer.

Context Isn't a Transcript

Even before you hit the limit, longer inputs make models miss details. Engineers work around it. You lose control.

Here's the thing: your conversation isn't sitting in memory like a text file. It's being actively crammed into a space that's too small.

Context windows are limited. And even within that limit, the context "rots": output quality drops as you add more tokens.

You don't have to hit the limit for this to happen. Chroma calls it “context rot”: as the prompt gets longer, models get worse at using what you gave them.

So apps summarize. They compress. Sometimes they just keep the most recent messages. The point is: they decide what matters and throw away the rest. You can't see it.

The AI isn't re-reading your conversation. It's seeing a "compressed JPEG" of what you actually said - a lossy version. Zoom in and you'll find artifacts. Nuances vanish. The context it "remembers" is an approximation, not a record. And when you can't see what's been lost, you can't trust what you're building on next.
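To make that concrete, here's a rough sketch of the crudest strategy: keep only the most recent messages that fit. This is not how Cursor or any particular app does it. The function names, the word-count "tokenizer," and the budget of 20 are all made up for illustration.

```python
def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer (assumption): one token per word.
    return len(text.split())


def fit_to_budget(messages: list[str], budget: int) -> list[str]:
    """Keep the newest messages that fit in `budget` tokens; drop the rest."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break  # everything older than this point is silently dropped
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


# What you see in the chat window (hypothetical conversation).
transcript = [
    "We agreed: all handlers return Result objects, never raise.",
    "Here is the first handler.",
    "Looks good, here is the second handler.",
    "Now build the next piece, same pattern.",
]

# What the model might actually receive under a tight budget.
visible = fit_to_budget(transcript, budget=20)
```

With this budget, the first message never reaches the model, and it's the one that holds the rule everything else depends on. The chat window still shows all four.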

The "prompt" is the only input you have for the AI, and weirdly, we are okay giving up control over even that.

Write It Down or Lose It

Memory you can’t inspect isn’t memory. It’s hope.

You can't hope the summarisation algorithm cares about the specific pattern you spent three hours establishing. Hope is not a strategy.

What you need is as much control and predictability as possible over the tokens going in.

So I stopped treating chat as memory and started treating it as a session. Now I use two kinds of "write it down," and they do different jobs:

The file that doesn't forget - Cross Chat Memory

There's one place the rules live. Not in the thread. AGENTS.md is basically a README for the AI: the tiny set of constraints and conventions that should survive every restart.

In my workflow, the AI has one recurring job: keep this file up to date. Here’s the template you can steal:

# AGENTS.md

{...Your Instructions...}

### Learnings

> This section is automatically updated by the AI at the end of each session.

> Add new patterns, mistakes to avoid, and insights discovered during work.

- ...
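If you'd rather not rely on the AI alone for the bookkeeping, a small script can do the append for you. This is only a sketch: `add_learning` and `update_file` are hypothetical helpers, not part of any tool, and they assume, as in the template above, that Learnings is the last section of the file.

```python
from pathlib import Path

HEADING = "### Learnings"


def add_learning(agents_md: str, learning: str) -> str:
    """Return the AGENTS.md text with `learning` appended as a bullet.

    Assumes Learnings is the last section, as in the template above.
    """
    bullet = f"- {learning.strip()}"
    lines = agents_md.rstrip("\n").split("\n")
    if bullet in lines:
        return agents_md  # already recorded, don't duplicate it
    if HEADING not in lines:
        lines += ["", HEADING, ""]  # create the section if it's missing
    lines.append(bullet)
    return "\n".join(lines) + "\n"


def update_file(path: Path, learning: str) -> None:
    # Read, update, and write back, so the change shows up in your diff.
    original = path.read_text(encoding="utf-8") if path.exists() else "# AGENTS.md\n"
    path.write_text(add_learning(original, learning), encoding="utf-8")
```

Something like `update_file(Path("AGENTS.md"), "Handlers return Result objects, never raise")` at the end of a session keeps the file in version control, where you can read exactly what the next chat will be told.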

The note that gets you back into flow

Then there’s the handoff. Avoid a "forever thread". Do not keep asking the same thread to do one task after another.

Force yourself to press the "new chat" button. Do the '/new'. And start it with a handoff prompt. Not a summary of everything. Just enough to pick up tomorrow without spending 30 minutes reconstructing state.

And remember, you must review and edit it before you send it. Otherwise it's "hope" again.
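Here's a minimal sketch of what a handoff prompt can look like when you assemble it by hand. The three fields and the `build_handoff` name are my own assumptions about what's worth carrying over, not what the plugins below generate.

```python
def build_handoff(goal: str, decisions: list[str], next_step: str) -> str:
    """Assemble a short handoff prompt to paste into a fresh chat."""
    lines = [f"Goal: {goal}", "", "Decisions already made (do not revisit):"]
    lines += [f"- {decision}" for decision in decisions]
    lines += ["", f"Next step: {next_step}"]
    return "\n".join(lines)


# Hypothetical example values; replace them with your own session's state.
note = build_handoff(
    goal="Finish the export feature",
    decisions=["Handlers return Result objects, never raise", "CSV only for now"],
    next_step="Add the third handler using the same pattern",
)
print(note)
```

The output is plain text on purpose: you can read every token of it before it goes in.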

There are some really good plugins that give you a '/handoff' command, like:

1. Claude Code - https://github.com/kylesnowschwartz/claude-handoff

2. OpenCode - https://github.com/joshuadavidthomas/opencode-handoff

AmpCode has a really amazing article about this: https://ampcode.com/news/handoff

Stop trusting implicit memory. Start using explicit memory.